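Prerequisites

The computer and the phone are connected to the same Wi-Fi network, and Python with the OpenCV library is installed on the computer.

App to install: DroidCam https://www.dev47apps.com/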

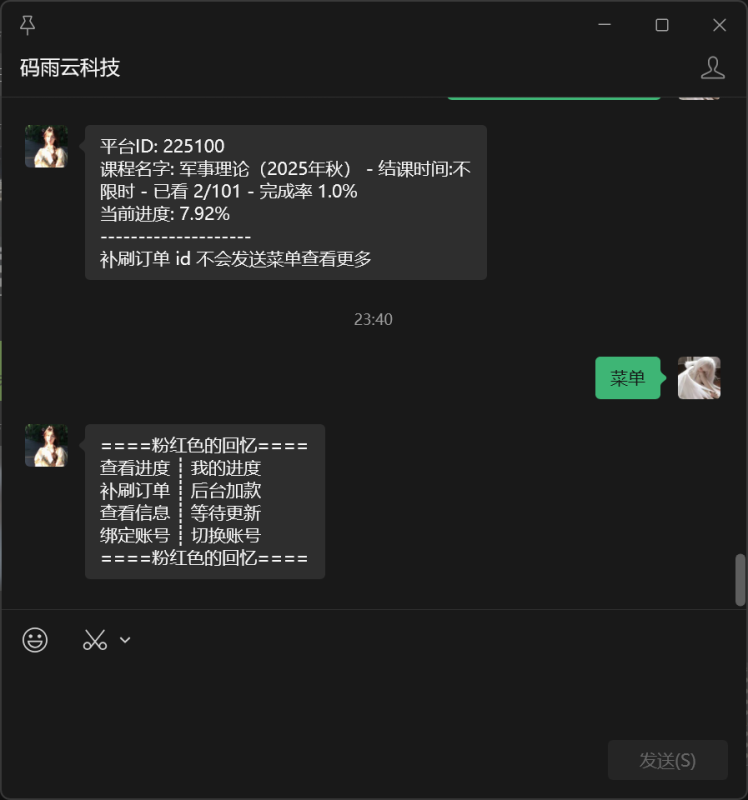

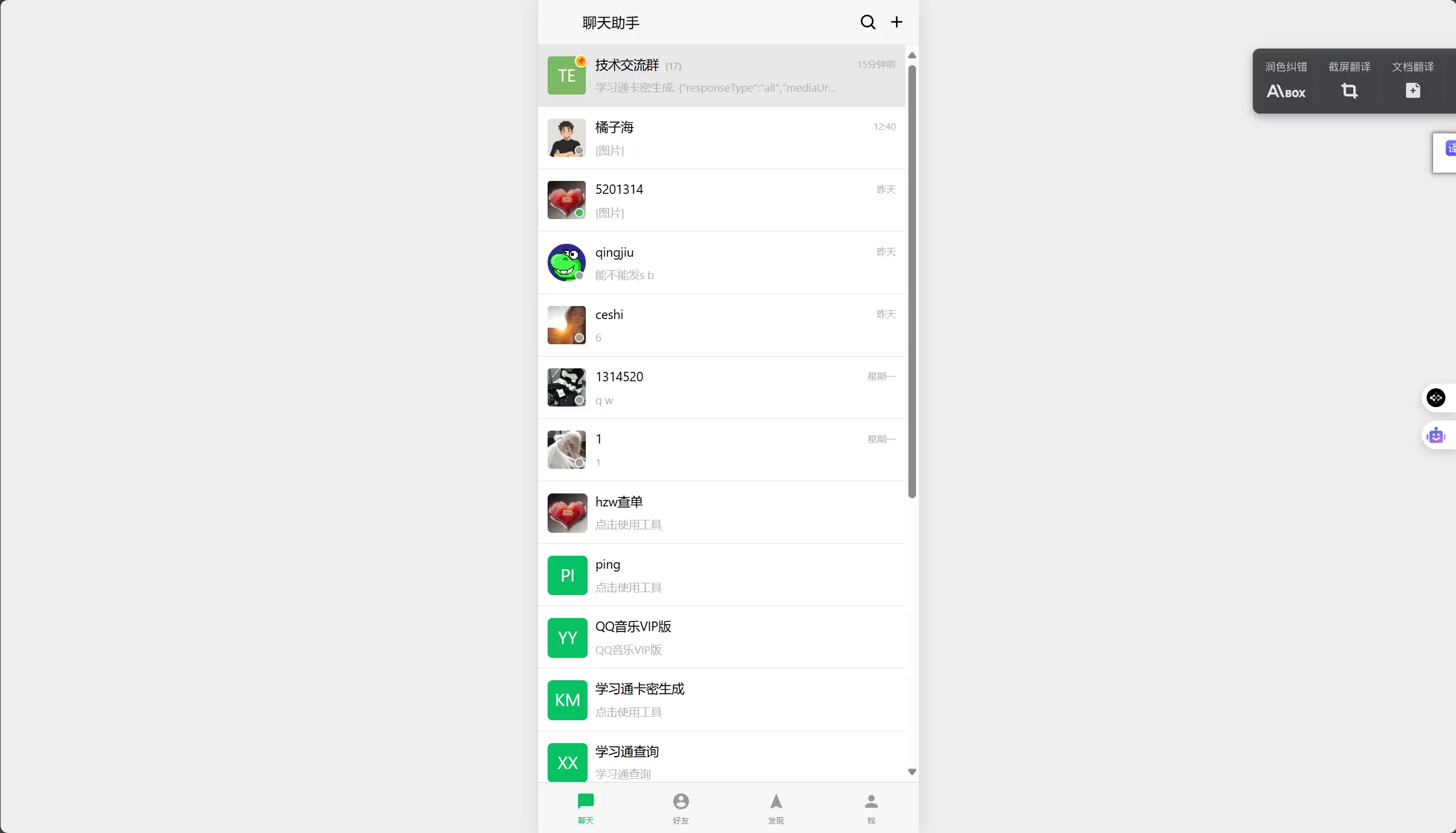

After downloading, open the app and skim the instructions, then go to the main screen, which looks like this:

![Figure 1: Accessing a phone camera from Python with OpenCV and DroidCam, using the phone as a monitoring terminal](https://www.aineishe.com/wp-content/uploads/2025/06/image-1.png)

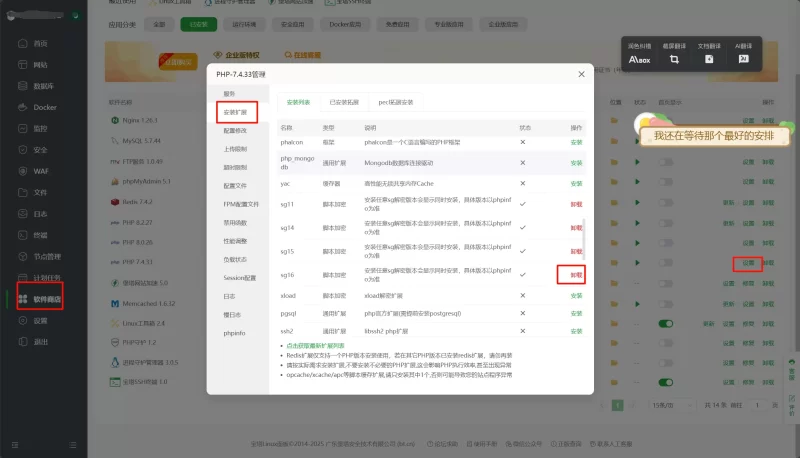

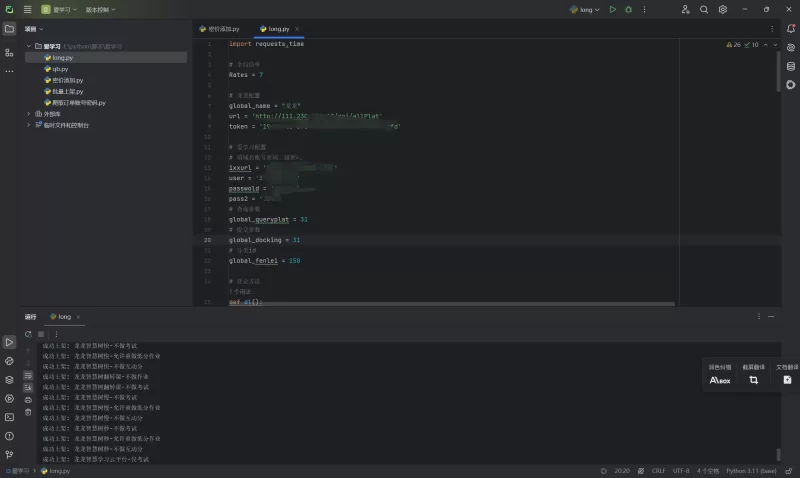

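Entering either of these two URLs in a browser shows the live picture from the phone camera.

![Figure 2: Accessing a phone camera from Python with OpenCV and DroidCam, using the phone as a monitoring terminal](https://www.aineishe.com/wp-content/uploads/2025/06/image-2.png)

The lower URL, the one with the extra `video` suffix, opens a page that contains only the video feed.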
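As a rough sketch of how the two addresses relate, the helper below builds both from the phone's IP address and passes the `/video` one to `cv2.VideoCapture`. The function names `droidcam_urls` and `open_stream` are hypothetical, the IP and port 4747 are taken from the code in this post, and the assumption that the first address is simply the root page should be checked against what the app shows on your phone.

```python
def droidcam_urls(host, port=4747):
    """Return the two addresses for a DroidCam phone (hypothetical helper).

    Assumption: the app serves a web page at the root and the bare stream at /video.
    """
    base = f"http://{host}:{port}"
    return {"page": f"{base}/", "video": f"{base}/video"}


def open_stream(url):
    """Open the stream with OpenCV, raising if the phone cannot be reached."""
    # Imported lazily so the URL helper above works even without opencv-python.
    import cv2

    cap = cv2.VideoCapture(url)
    if not cap.isOpened():
        raise ConnectionError(f"cannot open video stream: {url}")
    return cap


urls = droidcam_urls("192.168.137.175")
print(urls["video"])
```

Calling `open_stream(urls["video"])` then returns a capture object whose `read()` method yields frames, provided the phone is reachable on the network.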

Python code

    # -*- coding: utf-8 -*-
    import cv2
    import numpy as np
    import time
    import os
    import subprocess
    import sys
    from collections import deque

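    class FallDetectionSystem:
        def __init__(self):
            # Check for and install missing dependencies
            self.install_dependencies()

            # Open the DroidCam video stream
            self.droidcam_url = 'http://192.168.137.175:4747/video'
            self.cap = cv2.VideoCapture(self.droidcam_url)

            # Abort if the stream could not be opened. A bare return here would
            # leave the object half-initialized and make run()/cleanup() fail later.
            if not self.cap.isOpened():
                print(f"Error: cannot connect to the DroidCam video stream ({self.droidcam_url})")
                print("Please check: 1. DroidCam is running 2. the IP/port is correct 3. the network connection")
                sys.exit(1)

            # Request a resolution (a network stream may ignore this request)
            self.cap.set(cv2.CAP_PROP_FRAME_WIDTH, 640)
            self.cap.set(cv2.CAP_PROP_FRAME_HEIGHT, 480)

            # Read back the actual resolution
            width = int(self.cap.get(cv2.CAP_PROP_FRAME_WIDTH))
            height = int(self.cap.get(cv2.CAP_PROP_FRAME_HEIGHT))
            print(f"Stream resolution: {width}x{height}")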

            # Load the Haar cascade detectors
            self.face_cascade = cv2.CascadeClassifier(cv2.data.haarcascades + 'haarcascade_frontalface_default.xml')
            self.smile_cascade = cv2.CascadeClassifier(cv2.data.haarcascades + 'haarcascade_smile.xml')

            # Emotion-to-color mapping (BGR)
            self.emotion_colors = {
                'Happy': (0, 255, 0),
                'Neutral': (128, 128, 128),
                'Unknown': (255, 255, 255)
            }

            # Performance counters
            self.fps_counter = 0
            self.start_time = time.time()

            # Statistics
            self.total_faces_detected = 0
            self.emotion_stats = {'Happy': 0, 'Neutral': 0, 'Unknown': 0}

            # Motion detection
            self.background_subtractor = cv2.createBackgroundSubtractorMOG2(detectShadows=True)
            self.motion_threshold = 1000

            # Fall detection state
            self.fall_detected = False
            self.fall_alert_time = 0
            self.fall_alert_duration = 3
            self.fall_count = 0
            self.last_fall_time = 0
            self.fall_cooldown = 10

            # Body pose analysis
            self.person_positions = []
            self.position_history_size = 10

            # Detection modes
            self.detection_modes = ['All Detection', 'Face Only', 'Fall Detection Only']
            self.current_mode = 0

            # Side-to-side hand wave detection
            self.hand_positions = deque(maxlen=20)  # hand centers from the last 20 frames
            self.wave_threshold = 50                # horizontal travel threshold (pixels)
            self.wave_min_changes = 2               # at least 2 direction changes
            self.wave_time_window = 1.0             # time window (seconds)

        def install_dependencies(self):
            """Check for the required packages and install any that are missing."""
            # Map pip package names to import names: 'opencv-python' is imported as 'cv2'
            required_packages = {'opencv-python': 'cv2', 'numpy': 'numpy'}
            for package, module_name in required_packages.items():
                try:
                    __import__(module_name)
                except ImportError:
                    print(f"Installing {package}...")
                    try:
                        subprocess.check_call([sys.executable, '-m', 'pip', 'install', package])
                        print(f"✓ {package} installed")
                    except subprocess.CalledProcessError:
                        print(f"✗ failed to install {package}")

        def detect_faces(self, frame):
            """Detect faces."""
            gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)
            faces = self.face_cascade.detectMultiScale(
                gray,
                scaleFactor=1.1,
                minNeighbors=5,
                minSize=(30, 30)
            )
            return faces

        def detect_emotion(self, frame, face_rect):
            """Simple emotion detection: a detected smile counts as 'Happy'."""
            x, y, w, h = face_rect
            roi_gray = cv2.cvtColor(frame[y:y+h, x:x+w], cv2.COLOR_BGR2GRAY)
            smiles = self.smile_cascade.detectMultiScale(roi_gray, 1.8, 20)
            if len(smiles) > 0:
                return 'Happy', 0.8
            else:
                return 'Neutral', 0.6

        def detect_skin_color(self, frame):
            """Return a mask of skin-colored regions."""
            hsv = cv2.cvtColor(frame, cv2.COLOR_BGR2HSV)
            lower_skin = np.array([0, 20, 70], dtype=np.uint8)
            upper_skin = np.array([20, 255, 255], dtype=np.uint8)
            mask = cv2.inRange(hsv, lower_skin, upper_skin)
            # Remove small noise, then fill small holes
            kernel = np.ones((3, 3), np.uint8)
            mask = cv2.morphologyEx(mask, cv2.MORPH_OPEN, kernel)
            mask = cv2.morphologyEx(mask, cv2.MORPH_CLOSE, kernel)
            return mask

        def detect_thumbs_up(self, frame):
            """Detect a thumbs-up gesture."""
            skin_mask = self.detect_skin_color(frame)
            contours, _ = cv2.findContours(skin_mask, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)
            thumbs_up_detected = False
            thumbs_positions = []
            for contour in contours:
                if cv2.contourArea(contour) < 5000:
                    continue
                x, y, w, h = cv2.boundingRect(contour)
                if w < 30 or h < 60:
                    continue
                # A thumbs-up is expected to be clearly taller than it is wide
                aspect_ratio = float(h) / w if w > 0 else 0
                if aspect_ratio < 1.8 or aspect_ratio > 4.0:
                    continue
                # Ignore regions near the bottom of the frame
                if y > frame.shape[0] * 0.7:
                    continue
                hull = cv2.convexHull(contour, returnPoints=False)
                if len(hull) > 3:
                    defects = cv2.convexityDefects(contour, hull)
                    if defects is not None:
                        significant_defects = 0
                        for i in range(defects.shape[0]):
                            s, e, f, d = defects[i, 0]
                            if d > 12000:
                                significant_defects += 1
                        if significant_defects == 1:
                            area = cv2.contourArea(contour)
                            perimeter = cv2.arcLength(contour, True)
                            if perimeter > 0:
                                compactness = (perimeter * perimeter) / area
                                if 15 < compactness < 50:
                                    thumbs_up_detected = True
                                    thumbs_positions.append((x, y, w, h))
                                    break
            return thumbs_up_detected, thumbs_positions

        def detect_hand_wave(self, frame):
            """Detect a side-to-side hand wave."""
            skin_mask = self.detect_skin_color(frame)
            contours, _ = cv2.findContours(skin_mask, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)
            wave_detected = False
            wave_positions = []
            for contour in contours:
                area = cv2.contourArea(contour)
                if area < 5000 or area > 50000:  # limit the size of the hand region
                    continue
                x, y, w, h = cv2.boundingRect(contour)
                if w < 30 or h < 30 or w > h * 2:  # hand shape constraints
                    continue
                if y > frame.shape[0] * 0.7:  # not at the bottom of the frame
                    continue
                # Center of the hand region
                center_x = x + w // 2
                center_y = y + h // 2
                current_time = time.time()
                # Record the hand position and timestamp
                self.hand_positions.append((center_x, current_time))
                # Analyze the recent hand positions
                if len(self.hand_positions) >= 5:  # need at least 5 frames to judge a wave
                    # Keep only the positions inside the time window
                    valid_positions = [(px, t) for px, t in self.hand_positions
                                       if current_time - t <= self.wave_time_window]
                    if len(valid_positions) >= 5:
                        x_coords = [px for px, _ in valid_positions]
                        # Horizontal range of movement
                        x_range = max(x_coords) - min(x_coords)
                        # Count direction changes (local peaks and valleys)
                        direction_changes = 0
                        for i in range(1, len(x_coords) - 1):
                            if (x_coords[i] > x_coords[i-1] and x_coords[i] > x_coords[i+1]) or \
                               (x_coords[i] < x_coords[i-1] and x_coords[i] < x_coords[i+1]):
                                direction_changes += 1
                        if x_range > self.wave_threshold and direction_changes >= self.wave_min_changes:
                            wave_detected = True
                            wave_positions.append((x, y, w, h))
                            break
            return wave_detected, wave_positions

        def detect_motion_and_fall(self, frame):
            """Detect motion and falls."""
            fg_mask = self.background_subtractor.apply(frame)
            kernel = np.ones((5, 5), np.uint8)
            fg_mask = cv2.morphologyEx(fg_mask, cv2.MORPH_CLOSE, kernel)
            fg_mask = cv2.morphologyEx(fg_mask, cv2.MORPH_OPEN, kernel)
            contours, _ = cv2.findContours(fg_mask, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)
            motion_areas = []
            fall_risk = False
            for contour in contours:
                if cv2.contourArea(contour) > self.motion_threshold:
                    x, y, w, h = cv2.boundingRect(contour)
                    motion_areas.append((x, y, w, h))
                    # A wide, low region in the lower part of the frame suggests a fall
                    aspect_ratio = float(w) / h if h > 0 else 0
                    if aspect_ratio > 2.0 and y > frame.shape[0] * 0.6:
                        fall_risk = True

            # Detect the thumbs-up gesture
            thumbs_up, thumbs_positions = self.detect_thumbs_up(frame)
            # Detect the side-to-side hand wave
            wave_detected, wave_positions = self.detect_hand_wave(frame)
            current_time = time.time()
            fall_trigger_type = None

            # Handle a thumbs-up trigger (respecting the cooldown)
            if thumbs_up and len(thumbs_positions) > 0 and (current_time - self.last_fall_time) > self.fall_cooldown:
                valid_thumbs = False
                for (x, y, w, h) in thumbs_positions:
                    if (w > 30 and h > 60 and y < frame.shape[0] * 0.7 and x > 50 and x < frame.shape[1] - 50):
                        valid_thumbs = True
                        break
                if valid_thumbs:
                    fall_trigger_type = "Thumbs Up"
                    self.trigger_fall_alert(frame, fall_trigger_type)
                    self.last_fall_time = current_time
                    fall_risk = True

            # Handle a hand-wave trigger (respecting the cooldown)
            if wave_detected and len(wave_positions) > 0 and (current_time - self.last_fall_time) > self.fall_cooldown:
                valid_wave = False
                for (x, y, w, h) in wave_positions:
                    if (w > 30 and h > 30 and y < frame.shape[0] * 0.7 and x > 50 and x < frame.shape[1] - 50):
                        valid_wave = True
                        break
                if valid_wave:
                    fall_trigger_type = "Hand Wave"
                    self.trigger_fall_alert(frame, fall_trigger_type)
                    self.last_fall_time = current_time
                    fall_risk = True

            return motion_areas, fall_risk, thumbs_positions, fg_mask, wave_positions

        def trigger_fall_alert(self, frame, trigger_type):
            """Trigger the fall alert."""
            self.fall_detected = True
            self.fall_alert_time = time.time()
            self.fall_count += 1
            self.save_emergency_screenshot(frame, trigger_type)
            print(f"🚨 Fall alert! Emergency detected! (trigger: {trigger_type})")
            print(f"Fall count: {self.fall_count}")

        def save_emergency_screenshot(self, frame, trigger_type):
            """Save an emergency screenshot and a short text report."""
            save_dir = "D:/demoimg/emergency"
            os.makedirs(save_dir, exist_ok=True)
            timestamp = time.strftime("%Y%m%d_%H%M%S")
            filename = os.path.join(save_dir, f"EMERGENCY_FALL_{timestamp}.jpg")

            # Annotate a copy of the frame with a red border and event details
            emergency_frame = frame.copy()
            cv2.rectangle(emergency_frame, (0, 0), (frame.shape[1]-1, frame.shape[0]-1), (0, 0, 255), 5)
            cv2.putText(emergency_frame, "EMERGENCY - FALL DETECTED!", (50, 50),
                        cv2.FONT_HERSHEY_SIMPLEX, 1.0, (0, 0, 255), 3)
            cv2.putText(emergency_frame, f"Time: {time.strftime('%Y-%m-%d %H:%M:%S')}", (50, 100),
                        cv2.FONT_HERSHEY_SIMPLEX, 0.8, (0, 0, 255), 2)
            cv2.putText(emergency_frame, f"Fall Count: {self.fall_count}", (50, 150),
                        cv2.FONT_HERSHEY_SIMPLEX, 0.8, (0, 0, 255), 2)
            cv2.putText(emergency_frame, f"Trigger: {trigger_type}", (50, 200),
                        cv2.FONT_HERSHEY_SIMPLEX, 0.8, (0, 0, 255), 2)
            cv2.imwrite(filename, emergency_frame)

            info_filename = os.path.join(save_dir, f"EMERGENCY_INFO_{timestamp}.txt")
            with open(info_filename, 'w', encoding='utf-8') as f:
                f.write("🚨 Emergency report 🚨\n")
                f.write("=" * 30 + "\n")
                f.write(f"Detected at: {time.strftime('%Y-%m-%d %H:%M:%S')}\n")
                f.write("Event type: fall detection\n")
                f.write(f"Fall count: {self.fall_count}\n")
                f.write(f"Trigger: {trigger_type}\n")
                f.write(f"Image file: {filename}\n")
                f.write("\nNote: this is a simulated fall detection; the alert is actually "
                        "triggered by a thumbs-up or a side-to-side hand wave\n")
            print(f"🚨 Emergency screenshot saved: {filename}")
            print(f"📄 Emergency info saved: {info_filename}")

        def draw_face_and_emotion(self, frame, faces):
            """Draw the face boxes and emotion labels."""
            face_emotions = []
            for i, (x, y, w, h) in enumerate(faces):
                emotion, confidence = self.detect_emotion(frame, (x, y, w, h))
                face_emotions.append(emotion)
                color = self.emotion_colors.get(emotion, (255, 255, 255))
                cv2.rectangle(frame, (x, y), (x + w, y + h), color, 2)
                label = f'Face {i+1}: {emotion}'
                cv2.putText(frame, label, (x, y - 10),
                            cv2.FONT_HERSHEY_SIMPLEX, 0.6, color, 2)
                center_x, center_y = x + w//2, y + h//2
                self.draw_emotion_icon(frame, center_x, center_y, emotion)
                self.emotion_stats[emotion] += 1
            return frame, face_emotions

        def draw_motion_and_fall(self, frame, motion_areas, fall_risk, thumbs_positions, wave_positions):
            """Draw the motion regions and the fall alert."""
            for (x, y, w, h) in motion_areas:
                color = (0, 0, 255) if fall_risk else (0, 255, 255)
                cv2.rectangle(frame, (x, y), (x + w, y + h), color, 2)
                label = 'Fall Risk!' if fall_risk else 'Motion'
                cv2.putText(frame, label, (x, y - 10),
                            cv2.FONT_HERSHEY_SIMPLEX, 0.6, color, 2)
            for (x, y, w, h) in thumbs_positions:
                cv2.rectangle(frame, (x, y), (x + w, y + h), (255, 0, 255), 2)
                cv2.putText(frame, 'Thumbs Up - Fall Signal!', (x, y - 10),
                            cv2.FONT_HERSHEY_SIMPLEX, 0.6, (255, 0, 255), 2)
            for (x, y, w, h) in wave_positions:
                cv2.rectangle(frame, (x, y), (x + w, y + h), (0, 255, 255), 2)
                cv2.putText(frame, 'Wave Detected - Fall Signal!', (x, y - 10),
                            cv2.FONT_HERSHEY_SIMPLEX, 0.6, (0, 255, 255), 2)
            if self.fall_detected:
                current_time = time.time()
                if (current_time - self.fall_alert_time) < self.fall_alert_duration:
                    # Flash a translucent red overlay a few times per second
                    if int((current_time - self.fall_alert_time) * 4) % 2 == 0:
                        overlay = frame.copy()
                        cv2.rectangle(overlay, (0, 0), (frame.shape[1], frame.shape[0]), (0, 0, 255), -1)
                        cv2.addWeighted(overlay, 0.3, frame, 0.7, 0, frame)
                    # The Hershey fonts used by cv2.putText cannot render emoji, so use plain ASCII
                    cv2.putText(frame, "FALL DETECTED!", (frame.shape[1]//2 - 150, frame.shape[0]//2),
                                cv2.FONT_HERSHEY_SIMPLEX, 1.2, (0, 0, 255), 3)
                else:
                    self.fall_detected = False
            return frame

        def draw_emotion_icon(self, frame, center_x, center_y, emotion):
            """Draw a small face icon for the emotion."""
            if emotion == 'Happy':
                cv2.circle(frame, (center_x, center_y), 15, (0, 255, 0), 2)
                cv2.circle(frame, (center_x-5, center_y-3), 2, (0, 255, 0), -1)
                cv2.circle(frame, (center_x+5, center_y-3), 2, (0, 255, 0), -1)
                cv2.ellipse(frame, (center_x, center_y+5), (8, 4), 0, 0, 180, (0, 255, 0), 2)
            elif emotion == 'Neutral':
                cv2.circle(frame, (center_x, center_y), 15, (128, 128, 128), 2)
                cv2.circle(frame, (center_x-5, center_y-3), 2, (128, 128, 128), -1)
                cv2.circle(frame, (center_x+5, center_y-3), 2, (128, 128, 128), -1)
                cv2.line(frame, (center_x-6, center_y+6), (center_x+6, center_y+6), (128, 128, 128), 2)

        def calculate_fps(self):
            """Return the FPS about once per second, otherwise None."""
            self.fps_counter += 1
            elapsed_time = time.time() - self.start_time
            if elapsed_time >= 1.0:
                fps = self.fps_counter / elapsed_time
                self.fps_counter = 0
                self.start_time = time.time()
                return fps
            return None

        def add_info_display(self, frame, faces, face_emotions, motion_areas, fall_risk, fps=None):
            """Draw the information panel."""
            overlay = frame.copy()
            cv2.rectangle(overlay, (10, 10), (400, 220), (0, 0, 0), -1)
            cv2.addWeighted(overlay, 0.7, frame, 0.3, 0, frame)
            mode_text = f"Mode: {self.detection_modes[self.current_mode]}"
            cv2.putText(frame, mode_text, (20, 30),
                        cv2.FONT_HERSHEY_SIMPLEX, 0.6, (255, 255, 0), 2)
            face_count = len(faces)
            motion_count = len(motion_areas)
            cv2.putText(frame, f"Faces: {face_count}", (20, 55),
                        cv2.FONT_HERSHEY_SIMPLEX, 0.5, (255, 255, 255), 1)
            cv2.putText(frame, f"Motion Areas: {motion_count}", (20, 75),
                        cv2.FONT_HERSHEY_SIMPLEX, 0.5, (255, 255, 255), 1)
            fall_status = "HIGH RISK!" if fall_risk else "Normal"
            fall_color = (0, 0, 255) if fall_risk else (0, 255, 0)
            cv2.putText(frame, f"Fall Status: {fall_status}", (20, 95),
                        cv2.FONT_HERSHEY_SIMPLEX, 0.5, fall_color, 2)
            cv2.putText(frame, f"Fall Count: {self.fall_count}", (20, 115),
                        cv2.FONT_HERSHEY_SIMPLEX, 0.5, (255, 255, 255), 1)
            if fps is not None:
                cv2.putText(frame, f"FPS: {fps:.1f}", (20, 135),
                            cv2.FONT_HERSHEY_SIMPLEX, 0.5, (255, 255, 255), 1)
            if face_emotions:
                emotions_text = ", ".join(face_emotions)
                cv2.putText(frame, f"Emotions: {emotions_text}", (20, 155),
                            cv2.FONT_HERSHEY_SIMPLEX, 0.4, (255, 255, 255), 1)
            cv2.putText(frame, "SPACE: Switch Mode | q: Quit | s: Save", (20, 175),
                        cv2.FONT_HERSHEY_SIMPLEX, 0.4, (255, 255, 255), 1)
            cv2.putText(frame, "Thumbs Up or Hand Wave = Fall Signal", (20, 195),
                        cv2.FONT_HERSHEY_SIMPLEX, 0.4, (255, 0, 255), 1)
            cv2.putText(frame, "Debug: Thumbs-up & wave detection enabled", (20, 215),
                        cv2.FONT_HERSHEY_SIMPLEX, 0.3, (128, 128, 128), 1)
            self.add_statistics_display(frame)
            self.add_time_display(frame)
            return frame

        def add_statistics_display(self, frame):
            """Draw the statistics panel in the top-right corner."""
            overlay = frame.copy()
            cv2.rectangle(overlay, (frame.shape[1] - 220, 10), (frame.shape[1] - 10, 150), (0, 0, 0), -1)
            cv2.addWeighted(overlay, 0.7, frame, 0.3, 0, frame)
            cv2.putText(frame, "Fall Detection Stats:", (frame.shape[1] - 210, 30),
                        cv2.FONT_HERSHEY_SIMPLEX, 0.5, (255, 255, 255), 1)
            cv2.putText(frame, f"Total Falls: {self.fall_count}", (frame.shape[1] - 210, 55),
                        cv2.FONT_HERSHEY_SIMPLEX, 0.5, (255, 0, 0), 1)
            cv2.putText(frame, "Emotion Stats:", (frame.shape[1] - 210, 85),
                        cv2.FONT_HERSHEY_SIMPLEX, 0.5, (255, 255, 255), 1)
            y_offset = 105
            for emotion, count in self.emotion_stats.items():
                if count > 0:
                    color = self.emotion_colors.get(emotion, (255, 255, 255))
                    text = f"{emotion}: {count}"
                    cv2.putText(frame, text, (frame.shape[1] - 210, y_offset),
                                cv2.FONT_HERSHEY_SIMPLEX, 0.4, color, 1)
                    y_offset += 20

        def add_time_display(self, frame):
            """Draw the current time in the bottom-right corner."""
            current_time = time.strftime("%Y-%m-%d %H:%M:%S")
            (text_width, text_height), _ = cv2.getTextSize(current_time, cv2.FONT_HERSHEY_SIMPLEX, 0.6, 2)
            x = frame.shape[1] - text_width - 20
            y = frame.shape[0] - 20
            overlay = frame.copy()
            cv2.rectangle(overlay, (x - 10, y - text_height - 10), (x + text_width + 10, y + 10), (0, 0, 0), -1)
            cv2.addWeighted(overlay, 0.7, frame, 0.3, 0, frame)
            cv2.putText(frame, current_time, (x, y),
                        cv2.FONT_HERSHEY_SIMPLEX, 0.6, (255, 255, 255), 2)

        def save_screenshot(self, frame, faces, face_emotions, motion_areas, fall_risk):
            """Save a regular screenshot together with the detection info."""
            save_dir = "D:/demoimg"
            os.makedirs(save_dir, exist_ok=True)
            timestamp = time.strftime("%Y%m%d_%H%M%S")
            filename = os.path.join(save_dir, f"fall_detection_{timestamp}.jpg")
            cv2.imwrite(filename, frame)
            info_filename = os.path.join(save_dir, f"detection_info_{timestamp}.txt")
            with open(info_filename, 'w', encoding='utf-8') as f:
                f.write(f"Fall detection system screenshot time: {time.strftime('%Y-%m-%d %H:%M:%S')}\n")
                f.write(f"Detection mode: {self.detection_modes[self.current_mode]}\n\n")
                f.write(f"Faces detected: {len(faces)}\n")
                for i, ((x, y, w, h), emotion) in enumerate(zip(faces, face_emotions)):
                    f.write(f"Face {i+1}: position ({x}, {y}), size ({w}x{h}), emotion: {emotion}\n")
                f.write(f"\nMotion areas detected: {len(motion_areas)}\n")
                for i, (x, y, w, h) in enumerate(motion_areas):
                    f.write(f"Motion area {i+1}: position ({x}, {y}), size ({w}x{h})\n")
                f.write(f"\nFall risk status: {'high risk' if fall_risk else 'normal'}\n")
                f.write(f"Total falls so far: {self.fall_count}\n")
                f.write("\nEmotion stats:\n")
                for emotion, count in self.emotion_stats.items():
                    if count > 0:
                        f.write(f" {emotion}: {count}\n")
            print(f"Screenshot saved: {filename}")
            print(f"Detection info saved: {info_filename}")

        def run(self):
            """Run the fall detection system."""
            print("=== Fall detection system starting ===")
            print(f"Video source: {self.droidcam_url}")
            print("Features: face detection + emotion recognition + motion detection + fall alert")
            print("🚨 Fall simulation: a thumbs-up or a side-to-side hand wave triggers the alert and an automatic screenshot")
            print("Press SPACE to switch the detection mode")
            print("Press 'q' to quit")
            print("Press 's' to save a regular screenshot")
            print("========================")
            current_fps = None
            reconnect_attempts = 0
            max_reconnect_attempts = 5
            while True:
                ret, frame = self.cap.read()
                if not ret:
                    print("Warning: cannot read from the DroidCam video stream")
                    reconnect_attempts += 1
                    if reconnect_attempts > max_reconnect_attempts:
                        print(f"Error: failed to connect {max_reconnect_attempts} times in a row, exiting")
                        break
                    print(f"Trying to reconnect ({reconnect_attempts}/{max_reconnect_attempts})...")
                    self.cap.release()
                    self.cap = cv2.VideoCapture(self.droidcam_url)
                    if not self.cap.isOpened():
                        print("Reconnect failed, retrying...")
                        time.sleep(1)
                        continue
                    print("Reconnected!")
                    reconnect_attempts = 0
                    continue
                reconnect_attempts = 0

                # Mirror the image horizontally
                frame = cv2.flip(frame, 1)
                faces = []
                face_emotions = []
                motion_areas = []
                fall_risk = False
                thumbs_positions = []
                wave_positions = []
                current_mode = self.detection_modes[self.current_mode]
                if current_mode in ['All Detection', 'Face Only']:
                    faces = self.detect_faces(frame)
                    # Accumulate per-frame detections so cleanup() reports a real total
                    self.total_faces_detected += len(faces)
                    frame, face_emotions = self.draw_face_and_emotion(frame, faces)
                if current_mode in ['All Detection', 'Fall Detection Only']:
                    motion_areas, fall_risk, thumbs_positions, motion_mask, wave_positions = self.detect_motion_and_fall(frame)
                    frame = self.draw_motion_and_fall(frame, motion_areas, fall_risk, thumbs_positions, wave_positions)
                fps = self.calculate_fps()
                if fps is not None:
                    current_fps = fps
                frame = self.add_info_display(frame, faces, face_emotions, motion_areas, fall_risk, current_fps)
                cv2.imshow('Fall Detection System', frame)

                key = cv2.waitKey(1) & 0xFF
                if key == ord('q'):
                    print("Exiting...")
                    break
                elif key == ord('s'):
                    self.save_screenshot(frame, faces, face_emotions, motion_areas, fall_risk)
                elif key == ord(' '):
                    self.current_mode = (self.current_mode + 1) % len(self.detection_modes)
                    print(f"Switched to mode: {self.detection_modes[self.current_mode]}")
            self.cleanup()

        def cleanup(self):
            """Release resources."""
            self.cap.release()
            cv2.destroyAllWindows()
            print("Video stream closed")
            print(f"Total face detections this session: {self.total_faces_detected}")
            print(f"Fall alerts triggered: {self.fall_count}")
            if self.fall_count > 0:
                print("⚠️ Please check the emergency screenshots in the D:/demoimg/emergency/ folder")
            print("Program exited")

    if __name__ == "__main__":
        detector = FallDetectionSystem()
        detector.run()
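The two geometric heuristics in the code above, counting direction changes for the hand wave and checking the bounding-box shape for a fall, can be tried without a camera. The following is a minimal sketch in plain Python: the function names are hypothetical, the default thresholds mirror the values used in the class (50 pixels, 2 direction changes, at least 5 samples, aspect ratio 2.0, lower 40% of the frame), and the sample coordinates are made up for illustration.

```python
def count_direction_changes(xs):
    """Count strict local peaks and valleys in a sequence of x coordinates."""
    changes = 0
    for i in range(1, len(xs) - 1):
        is_peak = xs[i] > xs[i - 1] and xs[i] > xs[i + 1]
        is_valley = xs[i] < xs[i - 1] and xs[i] < xs[i + 1]
        if is_peak or is_valley:
            changes += 1
    return changes


def is_wave(xs, min_range=50, min_changes=2, min_samples=5):
    """A wave needs enough samples, enough horizontal travel, and enough reversals."""
    if len(xs) < min_samples:
        return False
    travel = max(xs) - min(xs)
    return travel > min_range and count_direction_changes(xs) >= min_changes


def looks_like_fall(box, frame_height, min_aspect=2.0, lower_fraction=0.6):
    """A wide, flat box whose top edge lies in the lower part of the frame."""
    x, y, w, h = box
    if h <= 0:
        return False
    return (w / h) > min_aspect and y > frame_height * lower_fraction


# Made-up hand-center tracks: one oscillating, one drifting steadily to the right.
wave_track = [100, 140, 180, 140, 100, 140, 180]
drift_track = [100, 120, 140, 160, 180, 200, 220]
print(is_wave(wave_track), is_wave(drift_track))

# Made-up boxes (x, y, w, h) in a 480-pixel-high frame: lying down vs. standing.
print(looks_like_fall((200, 350, 240, 80), frame_height=480))
print(looks_like_fall((200, 100, 80, 240), frame_height=480))
```

Separating these checks from the OpenCV calls makes it easier to tune the thresholds against recorded coordinates before testing them on a live stream.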
